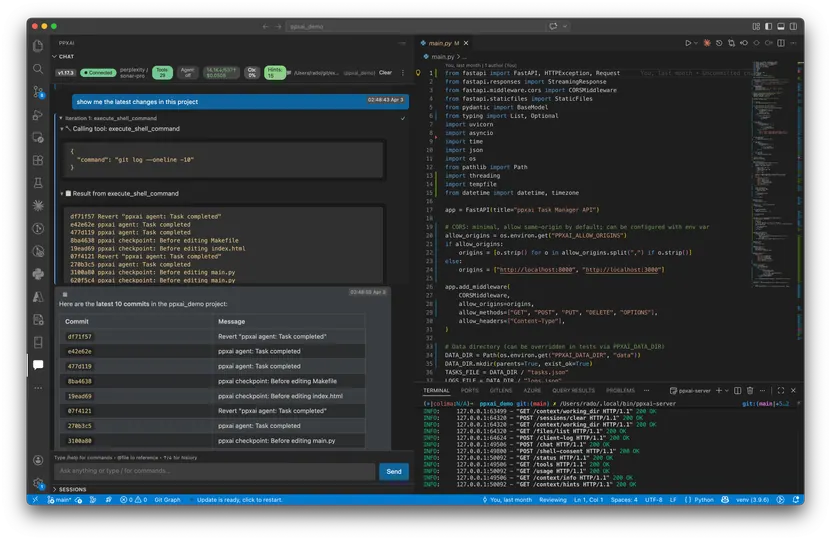

Open-source multi-LLM interface for developers. TUI + VSCode extension. Zero vendor lock-in. Use Perplexity, Gemini, OpenAI, OpenRouter, or local models.

Copilot says:

Say goodbye to vendor lock-in: this open-source AI assistant lets you chat with large language models from Perplexity, Gemini, OpenAI, OpenRouter, or a local setup, right from your terminal, a desktop web app, or VSCode, and you can switch models mid-session. It's like a Swiss Army knife for AI coding: flexible, wallet-friendly, and fully transparent.

Key features:

- 🔄 Seamlessly switch between multiple LLM providers mid-session

- 🖥️ Use through terminal, desktop web app, or VSCode extension

- 💸 Mix local models and free tiers to save on API costs

- 🛠️ Fully open source and self-hostable

This summary was generated by GitHub Copilot based on the project README.